A recent TechCrunch feature covers our portfolio company Niv-AI, which has exited stealth with $12 million in seed funding and aims to solve one of AI infrastructure’s growing constraints: inefficient power usage in data centers.

What problem is Niv-AI addressing in AI infrastructure?

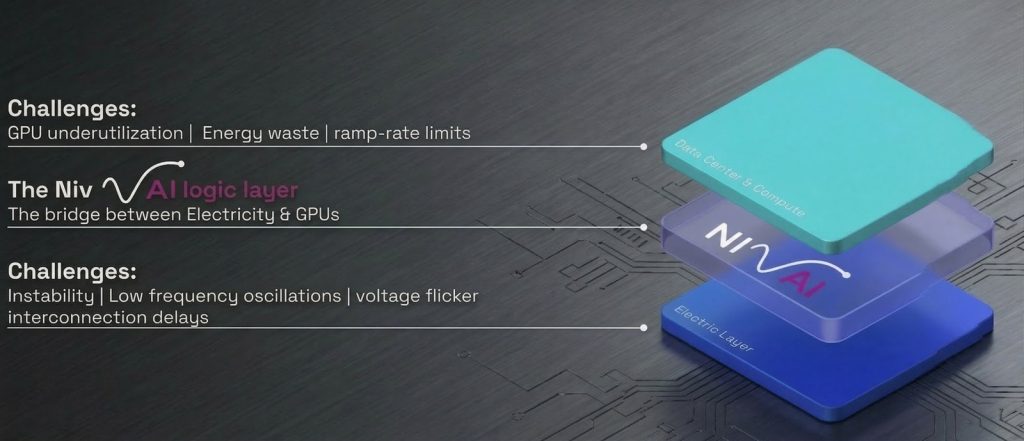

AI systems are increasingly limited not just by compute, but by electricity. Data centers struggle to manage rapid, millisecond-scale power surges from GPUs, forcing them to either over-provision energy or throttle usage by up to 30%.

The result is significant inefficiency: unused power translates directly into lost revenue.
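
To make the link between throttling and revenue concrete, here is a rough back-of-the-envelope sketch. Every number in it (site size, power per GPU, revenue per GPU-hour) is a hypothetical placeholder rather than a figure from Niv-AI or the TechCrunch article; only the 30% throttle ceiling comes from the text above. It also assumes, for simplicity, that every stranded kilowatt could otherwise have powered a fully utilized GPU.

```python
def stranded_power_kw(provisioned_kw: float, throttle_fraction: float) -> float:
    """Power that is provisioned but left unused when GPUs are throttled."""
    if not 0.0 <= throttle_fraction <= 1.0:
        raise ValueError("throttle_fraction must be between 0 and 1")
    return provisioned_kw * throttle_fraction


def lost_revenue_per_hour(stranded_kw: float,
                          kw_per_gpu: float,
                          revenue_per_gpu_hour: float) -> float:
    """Hourly revenue forgone, assuming stranded power could have run GPUs at full load."""
    if kw_per_gpu <= 0:
        raise ValueError("kw_per_gpu must be positive")
    idle_gpu_equivalents = stranded_kw / kw_per_gpu
    return idle_gpu_equivalents * revenue_per_gpu_hour


# Hypothetical inputs: a 10 MW site throttled by 30%,
# 1 kW per GPU and $2 of revenue per GPU-hour (illustrative values only).
stranded = stranded_power_kw(provisioned_kw=10_000, throttle_fraction=0.30)
loss = lost_revenue_per_hour(stranded, kw_per_gpu=1.0, revenue_per_gpu_hour=2.0)

print(f"Stranded power: {stranded:,.0f} kW")
print(f"Forgone revenue: ${loss:,.0f} per hour")
```

Under these assumed inputs, the loss scales linearly with both the throttle fraction and the size of the site, which is why even a few percentage points of recovered headroom can matter at hyperscale.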

How does Niv-AI’s technology solve this challenge?

Niv-AI is building an “intelligence layer” between data centers and the power grid, using sensors to precisely measure GPU power consumption and optimize it in real time. The goal is to better match energy usage with demand, reducing waste and improving overall system efficiency.

Why is this becoming critical now?

As hyperscalers scale AI infrastructure, they face increasing constraints around energy availability, land use, and grid integration. Current approaches – such as energy storage or throttling – reduce returns on expensive GPU investments and are not sustainable at scale.

What is Grove Ventures’ perspective on this shift?

Lior Handelsman highlights that the current model for building data centers is no longer viable:

“We just can’t continue building data centers the way we build them now.”

The growing gap between AI compute demand and energy infrastructure is creating a new category of innovation focused on power efficiency.

What is the broader insight?

Electricity is becoming a core bottleneck – and opportunity – in AI.

As AI scales, optimizing power usage may be as critical as improving compute itself, opening the door for new infrastructure layers that manage energy as a first-class resource.

Read the full article here.